Talk:Stirling's approximation

This page has archives. Sections older than 365 days may be automatically archived by Lowercase sigmabot III when more than 10 sections are present.

Gosper's approx

I saw this on the OEIS wiki; it appears superior to Stirling's. Ref: Bill Gosper and the OEIS article. Its form is nearly identical.--Billymac00 (talk) 15:44, 15 August 2014 (UTC)

- That just moves (an approximation of) the factor into the square root, doesn't it? See Stirling's approximation#Speed of convergence and error estimates. — Chrisahn (talk) 11:50, 2 August 2021 (UTC)
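To make the comparison concrete, here is a minimal Python sketch. It assumes Gosper's formula in the form usually quoted, $n! \approx \sqrt{(2n + 1/3)\pi}\,(n/e)^n$, and it reads "the factor" in the reply above as the first Stirling-series correction $1 + 1/(12n)$. The formula is missing from that comment, so this reading is an assumption, and the function names are made up for illustration.

```python
import math

def stirling(n):
    """Leading-order Stirling approximation: sqrt(2*pi*n) * (n/e)^n."""
    return math.sqrt(2 * math.pi * n) * (n / math.e) ** n

def stirling_corrected(n):
    """Stirling with the first series correction (1 + 1/(12n)); assumed reading of 'the factor'."""
    return stirling(n) * (1 + 1 / (12 * n))

def gosper(n):
    """Gosper's form: sqrt((2n + 1/3) * pi) * (n/e)^n."""
    return math.sqrt((2 * n + 1 / 3) * math.pi) * (n / math.e) ** n

def rel_err(approx, n):
    """Relative error of an approximation against the exact factorial."""
    exact = math.factorial(n)
    return abs(approx(n) - exact) / exact

for n in (1, 2, 5, 10, 20):
    print(n, rel_err(stirling, n), rel_err(stirling_corrected, n), rel_err(gosper, n))
```

Since $\sqrt{1 + 1/(6n)} = 1 + 1/(12n) - 1/(288n^2) + \cdots$, Gosper's form agrees with the corrected Stirling formula through order $1/n$, which is consistent with the reply above.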

\sim vs. =

An editor has recently replaced two copies of asymptotic equality with actual equality in non-convergent asymptotic series. Since the series don't converge, surely = is wrong; but somehow \sim misses the point, too (it is a much weaker statement). What are the usual notations used for asymptotic series like this? --JBL (talk) 00:46, 29 April 2015 (UTC)
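As a small illustration of why = is problematic here, the sketch below computes the sizes of the terms $|B_{2k}| / (2k(2k-1)\,n^{2k-1})$ of the Stirling series for $\ln n!$ at a fixed $n$. The terms shrink at first and then grow without bound, so the series cannot converge. Under the usual reading of an asymptotic expansion, the error after truncation is of the order of the first omitted term. The helper names are hypothetical.

```python
from fractions import Fraction
from math import comb

def bernoulli_numbers(m_max):
    """Return [B_0, ..., B_{m_max}] as exact fractions (convention B_1 = -1/2)."""
    B = [Fraction(1)]
    for m in range(1, m_max + 1):
        # Standard recurrence: sum_{k=0}^{m} C(m+1, k) B_k = 0
        s = sum(comb(m + 1, k) * B[k] for k in range(m))
        B.append(-s / (m + 1))
    return B

def stirling_term_sizes(n, k_max):
    """Sizes |B_{2k}| / (2k (2k-1) n^(2k-1)) for k = 1..k_max, as floats."""
    B = bernoulli_numbers(2 * k_max)
    return [
        float(abs(B[2 * k]) / (2 * k * (2 * k - 1) * Fraction(n) ** (2 * k - 1)))
        for k in range(1, k_max + 1)
    ]

sizes = stirling_term_sizes(1, 15)
for k, size in enumerate(sizes, start=1):
    print(k, size)
```

For larger $n$ the smallest term occurs later and is much smaller, which is why truncating the series is useful in practice even though the full series diverges.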

Graphic quality

A number of the images on this page are very "grainy" while others are quite clear. Any particular reason for this? 2620:117:C080:520:5E26:AFF:FEFE:8DBC (talk) 19:31, 16 May 2015 (UTC)

Reference for Convergent Version

Can we add a reference or a proof for the convergence of the convergent version of Stirling's series given using inverted rising factorials? -Chinju (talk) 17:39, 30 July 2015 (UTC)

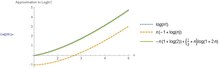

Faster convergence for 'integral' Derivation of ln(n!) by switching bounds

The "Derivation" section uses the integral:

Would it be worth noting that changing the bounds to gives an even tighter fit? In other words

(See plot below)

I realize this makes no difference for larger n, but it makes a difference for smaller n.

Prheenan (talk) 15:41, 14 January 2016 (UTC)
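The formulas in this comment did not survive, so the exact bounds proposed are unknown. Purely as an illustration, the sketch below assumes the original integral is $\int_1^n \ln x\,dx$ and that the shifted bounds are $1/2$ and $n + 1/2$ (a midpoint-rule choice). Both are assumptions, not a reconstruction of the comment, and the function names are hypothetical.

```python
import math

def log_factorial(n):
    """Exact reference value ln(n!)."""
    return math.log(math.factorial(n))

def integral_ln(a, b):
    """Integral of ln(x) from a to b, using the antiderivative x*ln(x) - x."""
    F = lambda x: x * math.log(x) - x
    return F(b) - F(a)

def errors(n):
    """Absolute errors of the two integral estimates of ln(n!)."""
    original = abs(log_factorial(n) - integral_ln(1, n))           # assumed original bounds
    shifted = abs(log_factorial(n) - integral_ln(0.5, n + 0.5))    # assumed shifted bounds
    return original, shifted

for n in (2, 5, 10, 50):
    print(n, errors(n))
```

Under these assumptions the shifted integral is closer to $\ln n!$ for every $n \ge 2$, with an error that stays bounded while the error of the unshifted integral grows like $\tfrac12 \ln n$.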

New figure for relative error

Glosser.ca recently added this figure showing the relative error of Stirling's approximation, replacing this older figure. Obviously the new figure is more attractive, except for one issue: there are "kinks" in two of the curves. This suggests, falsely, that these two approximations get abruptly better, then worse again, and this is not the case. I want to suggest that the figure should be changed to avoid this issue. Glosser.ca, are you willing to do this?

(The cause of the kinks is that the figure actually shows relative error as an approximation of Gamma(n + 1). Some of the approximating series begin as under-estimates and later become over-estimates, and so by continuity they actually are equal to the gamma function at some point. Thus, the relative error at those points is 0, and the log relative error goes to negative infinity, and we see kinks. I think the "right" solution is to interpolate the relative error, rather than interpolating the factorial function and then taking the relative error of that. I am open to other opinions.)

--JBL (talk) 16:24, 25 April 2016 (UTC)

- Joel_B._Lewis is exactly right and I had considered this when I constructed the new figure (though not your interpolation solution). Because the (continuous) approximation is largely an approximation *to* the (continuous) gamma function which conveniently also fits factorials, I'm inclined to say we keep the kinks, comment on them in the figure caption, and re-work the corresponding section to use the gamma function instead of factorials. Alternatively, interpolating the relative error should be really straightforward, or I can just make the plot discrete due to the discreteness of factorials (though this may not look as nice as the interpolation thing). Glosser.ca (talk) 17:51, 26 April 2016 (UTC)

- Thanks for your response. I am happy with your suggestion (implemented by David Eppstein) to keep the kinks, with a short explanation. (I should have thought to do this myself.) I do not think it is necessary to rewrite the section, as the relationship with the gamma function is treated in the immediately following section. --JBL (talk) 22:31, 26 April 2016 (UTC)
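The mechanism behind the kinks can be reproduced with a short sketch. Which curves in the figure show kinks is not stated in this thread, so the truncation used here, $\sqrt{2\pi x}\,(x/e)^x\,(1 + 1/(12x))$ compared with $\Gamma(x+1)$, is only an illustrative choice, and the function names are hypothetical. The signed relative error changes sign between $x = 0.1$ and $x = 1$, so its absolute value passes through zero and the log of the relative error dips towards negative infinity there.

```python
import math

def stirling_with_correction(x):
    """Illustrative truncation: sqrt(2*pi*x) * (x/e)^x * (1 + 1/(12x))."""
    return math.sqrt(2 * math.pi * x) * (x / math.e) ** x * (1 + 1 / (12 * x))

def signed_rel_error(x):
    """Signed relative error against Gamma(x + 1)."""
    return stirling_with_correction(x) / math.gamma(x + 1) - 1

def find_crossing(lo, hi, tol=1e-12):
    """Bisection for a sign change of the signed relative error on [lo, hi]."""
    f_lo = signed_rel_error(lo)
    while hi - lo > tol:
        mid = (lo + hi) / 2
        f_mid = signed_rel_error(mid)
        if (f_mid > 0) == (f_lo > 0):
            lo, f_lo = mid, f_mid   # sign change lies in the upper half
        else:
            hi = mid                # sign change lies in the lower half
    return (lo + hi) / 2

x0 = find_crossing(0.1, 1.0)
print(x0, signed_rel_error(x0))
```

A plot restricted to integer $n$ would not show this dip, which matches the alternative of making the plot discrete.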

Big-oh to big-theta in intro

The intro starts with the most common form used in many applications,

It would be more precise to use $\Theta(\ln n)$ here instead of $O$, although both are correct. Any objections before I go ahead and change this? — Preceding unsigned comment added by Cstanford.math (talk • contribs) 17:21, 5 November 2020 (UTC)

- Seeing as there was no objection, done now. Caleb Stanford (talk) 00:52, 8 November 2021 (UTC)

- I object. No matter what one does, any `approximation' consisting of finitely many terms could be deemed not precise enough. Stirling's formula is really an asymptotic series with infinitely many terms. (See e.g. Abramowitz and Stegun). What I dislike about the Big Theta notation is that it requires the reader (many of whom may just be calculus students who need to apply the root test) to learn Big Theta notation. This seems inappropriate for the lead. It makes it more technical than necessary. Lost-n-translation (talk) 12:30, 4 December 2022 (UTC)

- "is really" Well that to me looks like a rather idiosyncratic position. Every time in my life I have ever written "by Stirling's approximation", I have meant something no more precise than . (This is not to say that no one has ever used the same name for the infinite series, just that it is much more common for it to be used as described in the lead of the article.) JBL (talk) 17:20, 4 December 2022 (UTC)

- But do you use Big Theta notation? (I am not trying to troll here. Just trying to be a mathematician.) Anyway, I was simply answering Caleb Stanford's assertion that Big Theta makes the approximation `more precise'. Of course what he meant by making it `more precise' was that he was essentially adding a further term in the expansion. Instead of he wanted to have . It is more precise, but it seems arcane to me (and of course unsourced!). Lost-n-translation (talk) 22:00, 4 December 2022 (UTC)

- Separately: When I first came to the page, I expected that the lead would begin with what you wrote . Instead it begins with the unsourced estimate given in terms of Big Theta notation. Of course, we know that the given approximation is less precise than the one that you suggest and that I expected. Anyway, I didn't really want to get into this at all: My first edit on this page was simply adding a user friendly version of the big O estimate. I didn't want to get into a debate. I believe in a big tent. But David Eppstein successively reverted my addition, and now I am invested in seeing this page improved. Lost-n-translation (talk) 22:14, 4 December 2022 (UTC)

- As for idiosyncrasy: The first reference on this page is a very well-known classical book of Abramowitz and Stegun. I looked at this reference and it includes many terms past the usual one. (Does characterizing my position as `idiosyncratic' constitute trolling? Of course, I don't know that you really understood my position, because I too expected the usual first few terms...) Lost-n-translation (talk) 22:24, 4 December 2022 (UTC)
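On the $O$ versus $\Theta$ question in this section, a quick numerical check may help. The sketch below assumes the lead formula has the form $\ln n! = n\ln n - n + R(n)$ and looks at the ratio $R(n)/\ln n$. The ratio stays between two positive constants and drifts slowly towards $1/2$, which is what $R(n) = \Theta(\ln n)$ asserts, whereas $O(\ln n)$ alone only gives the upper bound. The function name is hypothetical.

```python
import math

def remainder_ratio(n):
    """(ln n! - (n ln n - n)) / ln n, computed with lgamma to avoid huge integers."""
    remainder = math.lgamma(n + 1) - (n * math.log(n) - n)
    return remainder / math.log(n)

for n in (10, 100, 10**4, 10**6):
    print(n, remainder_ratio(n))
```

The slow drift reflects the next term of the expansion, $\tfrac12 \ln(2\pi n)$, whose constant part $\tfrac12\ln(2\pi)$ is only suppressed by a factor of $1/\ln n$.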

valid-for-all-n bounds

user 2a02:ab88:370b:4d80:aac3:4ef7:a57f:df51 @Chrisahn: Bringing this discussion here. To whoever made the recent edit, can you please make an account and not edit anonymously?

I happen to agree with including this, actually. I think it is useful to comment on the fact that you can have a bound valid for all n and not just asymptotically. The included bound is indeed valid for (including real n). We could, however, add a comment that this is slightly less precise, particularly the upper bound as it has instead of . We could also instead include the Robbins bound and place the discussion of derived/simpler bounds there. Caleb Stanford (talk) 16:33, 2 November 2021 (UTC)

- Why can't we get a valid-for-all-n bound that at least has the correct leading coefficient? This one is too inaccurate. —David Eppstein (talk) 17:02, 2 November 2021 (UTC)

- User:David Eppstein Mentioning the full bound with the correct leading coefficient sounds good to me, but that factor is definitely annoying if I want something simpler. Is there a bound valid for all n that doesn't have the additional factor? is not valid for , so the simplest solution I can think of is the one proposed, with replaced by . Caleb Stanford (talk) 20:49, 2 November 2021 (UTC)

- @Caleb Stanford and David Eppstein: My reasons for removing the formula: For numerical applications, it's probably too imprecise. It may be useful in some proofs, but there are many other formulas that may be just as useful (depending on the proof). Which ones should we include in the article? If this particular formula is actually used in the literature, we could probably include it, but if it's only used occasionally, it shouldn't be in the lead, and if it's rarely found in the literature, it shouldn't be in the article at all. — Chrisahn (talk) 19:33, 7 November 2021 (UTC)

- @Chrisahn: Thanks! I fully agree with that criterion for whether or not to include a bound. Based on the discussion I included the Robbins bound in the lead as an example of a valid-for-all-n bound, and added a small note to the "Speed of convergence" section. Thoughts on this? I would also be happy with not mentioning the bound in the intro, but only mentioning that valid-for-all-n bounds do exist. Caleb Stanford (talk) 22:18, 7 November 2021 (UTC)
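For anyone who wants to sanity-check the Robbins bound mentioned in this section, here is a minimal sketch. It takes the bound in its standard form, $\sqrt{2\pi n}\,(n/e)^n e^{1/(12n+1)} < n! < \sqrt{2\pi n}\,(n/e)^n e^{1/(12n)}$ for integers $n \ge 1$, and compares logarithms to avoid overflow. The function names are hypothetical, and the range is kept small so that floating-point rounding stays well below the gap between $\ln n!$ and the upper bound.

```python
import math

def robbins_log_bounds(n):
    """Return (lower, upper) bounds on ln(n!) from the Robbins inequality."""
    base = n * math.log(n) - n + 0.5 * math.log(2 * math.pi * n)
    return base + 1 / (12 * n + 1), base + 1 / (12 * n)

def robbins_holds(n):
    """Check lower < ln(n!) < upper using the exact integer factorial."""
    lower, upper = robbins_log_bounds(n)
    return lower < math.log(math.factorial(n)) < upper

print(all(robbins_holds(n) for n in range(1, 101)))
```

A finite check like this is of course not a proof; it only confirms that the inequality as stated is plausible for small $n$, including $n = 1$.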

Does the log-base-2 version really belong in the lead?

As long as we're discussing the lead: does the log-base-2 version really belong here? It follows completely trivially from the previous formula and doesn't add any new information. I would be in favor of moving this note + the comment about comparison sort somewhere else in the article. Caleb Stanford (talk) 22:21, 7 November 2021 (UTC)

- The log-base-2 version is the one that all computer science students learn, because it is the version that is relevant for comparison sorting. For them, at least, it is the natural log that is much less relevant, and you could make the same argument that the natural-log version follows trivially from the log-base-2 version. —David Eppstein (talk) 22:37, 7 November 2021 (UTC)

- I'm not sure about "all computer scientists". The natural log version does happen to be slightly cleaner in this case. My main point is that the two formulas contain the same information so they dilute the information density of the lead. What about just stating: "The change-of-base formula can be used to give a version using the base-2-log, for instance in the worst-case lower bound for comparison sorting." "version using the base-2-log" could link to a section on variations if that would help reach consensus. Caleb Stanford (talk) 23:10, 7 November 2021 (UTC)

- Cleaner, maybe, because of the nicer constant on the linear term, but is there a significant application where a base-specific logarithm formula is necessary and the base is not 2? —David Eppstein (talk) 23:24, 7 November 2021 (UTC)

- To that question, my guess is the answer is no. But IMO it does not justify including essentially the same formula twice. Caleb Stanford (talk) 23:43, 7 November 2021 (UTC)

- Yes, but to my mind if we are to keep only one and the choice is aesthetics versus an important application, the application wins. You have not made any argument beyond aesthetics for keeping the natural log in the lead. —David Eppstein (talk) 00:04, 8 November 2021 (UTC)

- I agree with that assessment, aesthetics is my main argument. Given the choice I would prefer having only log-base-2 to having both. Somehow I expect most mathematicians would be upset with only including the log-base-2 one though. Waiting for a weigh-in from a third party. Caleb Stanford (talk) 00:36, 8 November 2021 (UTC)

- The article begins with `in mathematics' and so this article is an article about mathematics. Pure mathematics is a subject driven by aesthetics, though computer science may be driven by applications. Perhaps there should be a separate article for computer scientists. Lost-n-translation (talk) 12:59, 3 December 2022 (UTC)

- Why not use all bases? In mathematics we use `log' without a base when we realize the base is not important. Lost-n-translation (talk) 12:59, 3 December 2022 (UTC)

- It is difficult to distinguish some of your comments ("Perhaps there should be a separate article for computer scientists") from trolling. This is an article about some related mathematical theorems that are extremely useful in estimating asymptotic growth; it happens that many people interested in estimating asymptotic growth are working in fields adjacent to mathematics. This situation is ... extremely common. When writing a general-purpose encyclopedia, articles should be written for the broadest reasonable audience. If you want to write an article about Stirling's approximation that is intentionally exclusive of a large part of its potential audience, I guess go ahead, but don't do it here. JBL (talk) 17:25, 4 December 2022 (UTC)

- Sorry if I have offended anyone. I was actually quite serious. Why not have a separate page for computer scientists? Lost-n-translation (talk) 21:47, 4 December 2022 (UTC)

- As for the insertions of `citation needed' that you reverted: The entire lead is inadequately sourced. I have gone through the references given and only Mathworld gives the ln(n!) approximation and Mathworld mentions nothing about Big Theta. Lost-n-translation (talk) 21:49, 4 December 2022 (UTC)

- Leads do not generally need to be sourced. The standard convention on Wikipedia is that leads should generally contain material that is explained in more detail later in the article and that only that later expansion needs to be sourced. —David Eppstein (talk) 22:43, 4 December 2022 (UTC)

- The mathematical link to the Big Theta estimate is not explained in more detail in the article. (I know how to get it, but does the typical reader?) In any case, in referring to things as unsourced, I am simply following your example. You initially characterized my original edit as 'unsourced'. That edit was an addition to the lead. Why did you use 'unsourced' as a reason to revert? (Btw the first derivation of the approximation is not sourced.) Lost-n-translation (talk) 22:56, 4 December 2022 (UTC)

- Because it was an addition to the lead that was not material explained in more detail later in the article. —David Eppstein (talk) 23:03, 4 December 2022 (UTC)

- Would you say that the Big Theta estimate that appears in the lead is better explained in more detail in the article than the addition that I made? Lost-n-translation (talk) 00:43, 5 December 2022 (UTC)

- Are you actually looking for an explanation, or are you trying to live up to the "indistinguishable from a troll" characterization earlier in the discussion? —David Eppstein (talk) 00:48, 5 December 2022 (UTC)

- I'm being quite serious. My only intention all along was to make this page better. I do appreciate that you have now tried to explain the Big theta notation in the lead, but what is the difference between your explanation of Big Theta and an explanation of Big O? Of course Big Theta is mathematically different than Big O, but your explanation in the lead---in my mind---is really an explanation of Big O. Lost-n-translation (talk) 00:59, 5 December 2022 (UTC)

- You misunderstand the purpose of my edit. It was not to "try to explain the big theta notation", although it may have had the effect. There were two other more significant purposes: (1) to remove the dubious and unsourced claim that "the version of the formula typically used in applications" is the one with the natural log (I think the binary log is more typically used, but we don't need to make any claims about what is typical here), and (2) to consolidate your out-of-place repetition of the log-formula in yet another form into the part of the lead with the logarithms, and to recast it as an explanation rather than as yet another formula. —David Eppstein (talk) 01:03, 5 December 2022 (UTC)

- I greatly appreciate (1) and (2). Sorry for not mentioning that. Still, the language in the lead that explains the Big Theta notation does not really explain this notation. Big Theta means whereas Big O means . Note that my original insertion was . The Big Theta stuff makes the lead overly technical. Lost-n-translation (talk) 01:12, 5 December 2022 (UTC)

- My opinion is the opposite: Trying to explain the approximation bounds of the approximation formula by complicated circumlocutions that unnecessarily avoid the simplicity of big O or big theta notation makes the lead more convoluted and overly technical. But again, the language in the lead does not really explain this notation, as you say, because the lead of an article on a different topic is the wrong place to explain this notation. If the readers of this article want an explanation, that's what the link to the article on the notation is for. It's impossible to explain all mathematical notation in the leads of other articles. Perhaps you might not have noticed, but the lead does not explain factorials, logarithms, square roots, pi, e, addition, subtraction, exponentiation, division, or equality either. —David Eppstein (talk) 01:29, 5 December 2022 (UTC)

- So you agree that the current language `...for all sufficiently large values of the difference between...' does not belong in the lead?

- My opinion is that a reader should not have to go to read a technical article on notation to figure out what the first formula in the lead means. The better alternative, in my opinion, is to begin with a classical formula that both JBL and myself and most mathematicians recognize as Stirling's formula and which is also the variant that appears in all the references. Then move to where log has no base and with the comment that the base can be anything. Lost-n-translation (talk) 01:38, 5 December 2022 (UTC)

- Are you aware how trollish those sorts of "so you admit you were wrong all along" questions make you look, and how strongly that sort of wording discourages any serious attempt to answer them? —David Eppstein (talk) 01:46, 5 December 2022 (UTC)

- But I thought you were saying that you did not add that language, and that instead you only did (1) and (2). So how could you be wrong? I am so confused. Lost-n-translation (talk) 01:52, 5 December 2022 (UTC)

- Big Theta is not described in any of the standard calculus texts, whereas factorials, logarithms, square roots, pi, e, addition, subtraction, exponentiation, division, and equality are all described in a standard calculus text. Pre-college mathematics education in most developed countries includes factorials, logarithms, square roots, pi, e, addition, subtraction, exponentiation, division, and equality. I have never seen Big Theta in college mathematics in my 30+ years of teaching mathematics at the university level. Lost-n-translation (talk) 15:54, 5 December 2022 (UTC)

- And yet despite your 30 years in mathematics you remain willfully ignorant of the most basic material taught to freshman computer scientists? Why do you think the calculus curriculum is somehow more relevant to this topic than that? —David Eppstein (talk) 18:18, 5 December 2022 (UTC)
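For reference on the base question discussed in this section, the sketch below compares $\log_2 n!$ with the leading terms $n\log_2 n - n\log_2 e$ obtained from the natural-log formula by change of base, and computes the comparison-sorting lower bound $\lceil \log_2 n! \rceil$ mentioned above. It is only an illustration of how the two versions relate; the function names are hypothetical.

```python
import math

def log2_factorial(n):
    """Exact-integer route: log2 of n! (math.log2 accepts arbitrarily large ints)."""
    return math.log2(math.factorial(n))

def leading_terms_base2(n):
    """n*log2(n) - n*log2(e), the natural-log leading terms divided by ln 2."""
    return n * math.log2(n) - n * math.log2(math.e)

def min_comparisons(n):
    """Information-theoretic lower bound on worst-case comparisons to sort n items."""
    return math.ceil(log2_factorial(n))

for n in (4, 5, 10, 1000):
    print(n, min_comparisons(n), log2_factorial(n) - leading_terms_base2(n))
```

The only difference between the two versions is the constant on the linear term ($1$ versus $\log_2 e$); the remainder is of order $\log n$ in either base.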

Clarification of "Higher orders" section

Does anyone have a reference for the nice calculation summarised in the "Higher orders" section? I think it was added by @Cosmia Nebula a while back. I just tried repeating the calculation and got a slightly different answer which (annoyingly!) agrees with what is written to the first two orders. However if I go further it doesn't seem to get the next term in the Stirling series correct. A reference would help me figure out whether I have just gone astray somewhere or the article needs amending. ColmConnaughton (talk) 13:51, 11 April 2024 (UTC)
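As an independent cross-check for anyone repeating the calculation, the sketch below derives the higher-order coefficients by a different route than the article's: it takes the standard series $\ln n! - \left(n\ln n - n + \tfrac12\ln(2\pi n)\right) \sim \sum_{m\ge1} \frac{B_{2m}}{2m(2m-1)\,n^{2m-1}}$ and exponentiates it exactly with rational arithmetic. This is not the method of the "Higher orders" section, whose details are not reproduced in this thread, and the function names are hypothetical.

```python
from fractions import Fraction
from math import comb

def bernoulli_numbers(m_max):
    """Return [B_0, ..., B_{m_max}] as exact fractions (convention B_1 = -1/2)."""
    B = [Fraction(1)]
    for m in range(1, m_max + 1):
        s = sum(comb(m + 1, k) * B[k] for k in range(m))
        B.append(-s / (m + 1))
    return B

def log_series_coeffs(order):
    """a[k] = coefficient of n^(-k) in the log series; only odd k are nonzero."""
    B = bernoulli_numbers(order + 1)
    a = [Fraction(0)] * (order + 1)
    for k in range(1, order + 1, 2):
        a[k] = B[k + 1] / (k * (k + 1))
    return a

def exp_series(a):
    """Coefficients of exp(sum a[k] t^k), via k*b[k] = sum_{j=1..k} j*a[j]*b[k-j]."""
    b = [Fraction(1)] + [Fraction(0)] * (len(a) - 1)
    for k in range(1, len(a)):
        b[k] = sum(j * a[j] * b[k - j] for j in range(1, k + 1)) / k
    return b

# Coefficients of 1, 1/n, 1/n^2, ... in n! / (sqrt(2*pi*n) * (n/e)^n)
print(exp_series(log_series_coeffs(4)))
```

If a hand calculation agrees with the first two of these coefficients but not the third, comparing it term by term against this output may help locate where the two diverge.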